Introduction: Validating the Agentic Bottleneck

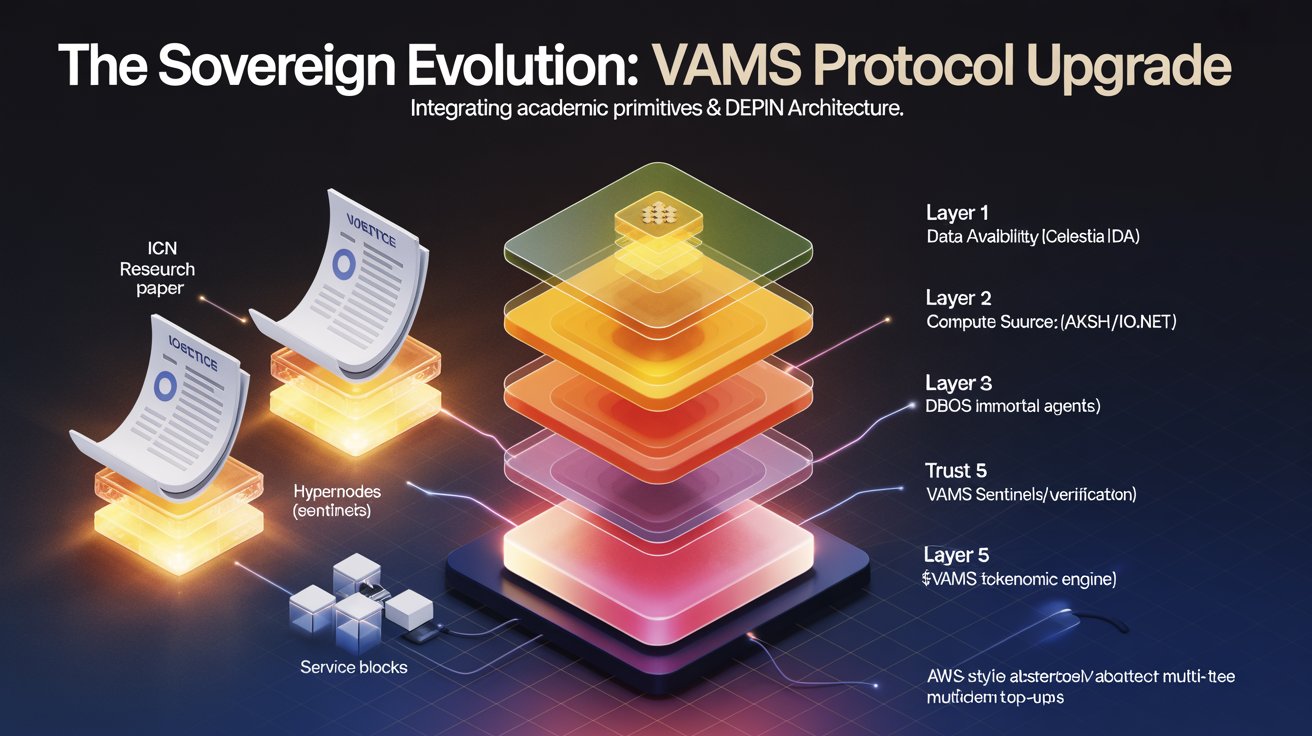

The convergence of autonomous AI agents and decentralized physical infrastructure networks (DePIN) has reached an architectural inflection point. In the foundational VAMS documentation, we defined the "Usability Crisis" and the "Agentic Bottleneck": the structural barriers that prevent AI agents from effectively using fragmented Web3 infrastructure.

Recent academic work supports this core thesis. The research paper "Impossible Cloud Network: A Decentralized Internet Infrastructure Layer" independently argues that the centralization of hyperscalers creates single points of failure, and that current Web3 alternatives lack the composability required for mainstream adoption. The paper also outlines the latency and coordination requirements of "Agentic AI", noting that the iterative sense-think-act loop depends on decentralized, edge-proximate compute to avoid critical failures.

At VAMS, our directive is to ruthlessly integrate superior mathematical and architectural models to harden our stack. By synthesizing the ICN framework into our architecture, we are upgrading the Verifiable and Agentic Modular Stack. Here is the technical roadmap for this integration.

1. The Blueprint Engine: Upgrading Layer 2 (Compute)

The Academic Insight: ICN proposes a "Resource Composition Layer" that dynamically decouples physical hardware from its capabilities. This creates logical resource units—compute, memory, networking, storage—that are reassembled via "Instance Blueprints".

The VAMS Upgrade: Currently, the VAMS Conditional L1 Router (CLR) routes complete workloads to specific monolithic DePIN providers, such as sending a GPU job to io.net or a container to Akash. We are actively upgrading the VAMS Gateway to include a Blueprint Composition Engine.

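Instead of requesting a monolithic provider instance, an agent will submit a resource requirement vector:

$$V_{req} = \{C_{cpu}, M_{ram}, S_{storage}, B_{network}\}$$

where $C_{cpu}$, $M_{ram}$, $S_{storage}$, and $B_{network}$ are the agent's compute, memory, storage, and network bandwidth requirements.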
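
To make the requirement vector concrete, here is a minimal Python sketch, assuming each component of V_req is a non-negative number in units chosen purely for illustration (vCPU cores, GiB of RAM, GiB of storage, Mbit/s of bandwidth). The class name `ResourceVector` and its methods are hypothetical, not a published VAMS API. The `fits_within` check shows why composition matters: a request can exceed a single provider's capacity in one dimension while fitting comfortably in the others.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ResourceVector:
    """Hypothetical encoding of V_req = {C_cpu, M_ram, S_storage, B_network}.

    Units are illustrative assumptions: vCPU cores, GiB of RAM,
    GiB of storage, and Mbit/s of network bandwidth.
    """

    cpu: float      # C_cpu
    ram: float      # M_ram
    storage: float  # S_storage
    network: float  # B_network

    def __post_init__(self) -> None:
        # Reject negative requirements early.
        for f in fields(self):
            if getattr(self, f.name) < 0:
                raise ValueError(f"{f.name} must be non-negative")

    def __add__(self, other: "ResourceVector") -> "ResourceVector":
        # Component-wise sum, e.g. for combining several workloads.
        return ResourceVector(
            self.cpu + other.cpu,
            self.ram + other.ram,
            self.storage + other.storage,
            self.network + other.network,
        )

    def fits_within(self, capacity: "ResourceVector") -> bool:
        # A single provider can host the request only if every component fits.
        return all(
            getattr(self, f.name) <= getattr(capacity, f.name) for f in fields(self)
        )


request = ResourceVector(cpu=4, ram=16, storage=100, network=200)
single_provider = ResourceVector(cpu=8, ram=32, storage=50, network=1000)
print(request.fits_within(single_provider))  # False: storage 100 > 50
```

Because the storage component alone fails, a monolithic router would reject this provider outright, whereas a composition engine could keep its CPU and RAM and source storage elsewhere.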

The VAMS Abstraction Engine will dynamically parse this blueprint and provision the logical units across multiple underlying DePINs simultaneously. An agent could, for example, run its reasoning engine on Bittensor, its active memory in an Akash container, and its permanent state on Arweave. These units are logically grouped into a single elastic instance that expands or contracts with real-time agent demand.
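
The composition step can be sketched as assigning each logical resource unit to a provider that has capacity for it, then recomposing when demand changes. This minimal Python illustration assumes a hypothetical provider catalog (the names echo the text, but every capacity and price is invented) and a simple cheapest-feasible-provider rule per dimension; `compose` and `scale` are illustrative names, not the engine's actual interface.

```python
from typing import Dict, Tuple

Catalog = Dict[str, Dict[str, Tuple[float, float]]]

# Hypothetical catalog: provider -> {resource dimension: (free capacity, price per unit)}.
# Provider names echo the text; every capacity and price here is invented.
CATALOG: Catalog = {
    "bittensor": {"cpu": (64, 0.02)},
    "akash": {"cpu": (32, 0.03), "ram": (256, 0.01), "network": (1_000, 0.004)},
    "arweave": {"storage": (10_000, 0.002)},
}


def compose(requirement: Dict[str, float], catalog: Catalog = CATALOG) -> Dict[str, str]:
    """Assign each logical resource unit to the cheapest provider that can supply it."""
    plan: Dict[str, str] = {}
    for dim, amount in requirement.items():
        candidates = []
        for name, offers in catalog.items():
            if dim in offers:
                capacity, price = offers[dim]
                if capacity >= amount:
                    candidates.append((price, name))
        if not candidates:
            raise RuntimeError(f"no provider can supply {amount} units of {dim}")
        plan[dim] = min(candidates)[1]  # lowest price wins; ties broken by name
    return plan


def scale(requirement: Dict[str, float], demand_factor: float) -> Dict[str, float]:
    """Elastic expansion/contraction: scale every dimension by current demand."""
    return {dim: amount * demand_factor for dim, amount in requirement.items()}


req = {"cpu": 8, "ram": 32, "storage": 500, "network": 100}
print(compose(req))
# {'cpu': 'bittensor', 'ram': 'akash', 'storage': 'arweave', 'network': 'akash'}
```

Choosing per dimension keeps the sketch short but ignores cross-provider costs such as moving data between compute and storage; a production composer would more likely optimize the whole assignment jointly and migrate state rather than recompose from scratch.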

2. VAMS Sentinel Agents: Upgrading Layer 4 (Trust)

The Academic Insight: To solve the accountability gap in decentralized hardware, ICN utilizes a "HyperNode Network"—a decentralized validator set that continuously challenges hardware nodes, generates performance reports, and posts cryptographic proofs to a "Satellite Network" for data availability.

The VAMS Upgrade: VAMS already utilizes a "Decagon" Trust Aggregator, verifying Phala TEE execution and ERC-8004 identity. However, to enforce continuous hardware accountability, we are deploying VAMS Sentinel Agents.

These autonomous Sentinels will continuously probe our integrated DePINs (io.net, Akash) with cryptographic challenges to verify SLA guarantees, and will anchor the resulting performance proofs directly to Celestia, our Layer 1 Data Availability provider. This creates a real-time, verifiable hardware reliability metric. The CLR will consume this data stream to route around failing providers before a workflow begins, reducing the probability of execution failure.
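
The Sentinel loop can be sketched as a challenge-response probe feeding a reliability score that the CLR consults before routing. This is a minimal Python illustration under explicit assumptions: the challenge (hashing a fresh random nonce), the 200 ms SLA bound, the exponentially weighted moving average, and the 0.9 routing threshold are all hypothetical choices, and the step that anchors reports to Celestia is omitted.

```python
import hashlib
import secrets
import time
from dataclasses import dataclass, field
from typing import Callable, Dict

# A provider endpoint is modeled as a function that answers a challenge nonce.
Provider = Callable[[bytes], str]


def expected_answer(nonce: bytes) -> str:
    # Hypothetical challenge: return SHA-256 of the nonce. This only proves
    # liveness and correct computation; a real SLA probe would also exercise
    # the GPU/CPU/storage capacity the provider claims.
    return hashlib.sha256(nonce).hexdigest()


@dataclass
class Sentinel:
    sla_ms: float = 200.0  # assumed latency bound from the SLA
    alpha: float = 0.2     # EWMA smoothing factor for the reliability score
    scores: Dict[str, float] = field(default_factory=dict)

    def challenge(self, name: str, provider: Provider) -> bool:
        nonce = secrets.token_bytes(32)  # fresh nonce prevents replayed answers
        start = time.perf_counter()
        answer = provider(nonce)
        latency_ms = (time.perf_counter() - start) * 1000
        passed = answer == expected_answer(nonce) and latency_ms <= self.sla_ms
        # Exponentially weighted moving average of pass (1.0) / fail (0.0).
        prev = self.scores.get(name, 1.0)
        self.scores[name] = (1 - self.alpha) * prev + self.alpha * float(passed)
        return passed


def route(scores: Dict[str, float], threshold: float = 0.9) -> str:
    """Pick the most reliable provider, skipping any below the threshold."""
    healthy = {name: s for name, s in scores.items() if s >= threshold}
    if not healthy:
        raise RuntimeError("no provider meets the reliability threshold")
    return max(healthy, key=healthy.__getitem__)


def honest(nonce: bytes) -> str:
    return hashlib.sha256(nonce).hexdigest()


def faulty(nonce: bytes) -> str:
    return "0" * 64  # always wrong


sentinel = Sentinel()
for _ in range(5):
    sentinel.challenge("provider-a", honest)
    sentinel.challenge("provider-b", faulty)
print(route(sentinel.scores))  # provider-a
```

The moving average lets a single slow response dent a provider's score without excluding it, while repeated failures push it below the threshold within a few rounds.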

3. DBOS Agentic Skill Blocks: Upgrading Layer 3 (Logic)

The Academic Insight: ICN introduces "Service Blocks," which are modular, plug-and-play software components built by third parties that can be deployed onto composed instances.

The VAMS Upgrade: VAMS utilizes the Database Operating System (DBOS) to grant agents "Immortality" via exactly-once execution semantics and L1 Merkle-root state anchoring. We are expanding DBOS to natively support Agentic Skill Blocks.
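
Exactly-once semantics in durable-execution systems generally rest on checkpointing: each completed step's output is recorded, and on recovery a recorded step is replayed from the log instead of re-executed. The sketch below illustrates that idea plus a Merkle-root commitment over the checkpoints, the kind of value that could be anchored to an L1. It is a hypothetical, in-memory Python illustration; `CheckpointLog`, `run_step`, and `state_root` are invented names and not the DBOS API.

```python
import hashlib
import json
from typing import Any, Callable, Dict, List, Tuple


class CheckpointLog:
    """In-memory stand-in for a durable checkpoint database (hypothetical)."""

    def __init__(self) -> None:
        self.entries: Dict[Tuple[str, int], Any] = {}

    def run_step(self, workflow_id: str, step: int, fn: Callable[[], Any]) -> Any:
        key = (workflow_id, step)
        if key in self.entries:
            # Step already completed (e.g. before a crash): replay the recorded
            # output instead of executing the side effect a second time.
            return self.entries[key]
        result = fn()
        # A production runtime commits this record atomically with the step;
        # here a crash between fn() and this line would re-run the step.
        self.entries[key] = result
        return result


def merkle_root(leaves: List[bytes]) -> str:
    """Binary SHA-256 Merkle root; odd levels duplicate their last node."""
    if not leaves:
        return hashlib.sha256(b"").hexdigest()
    level = [hashlib.sha256(leaf).digest() for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        level = [hashlib.sha256(level[i] + level[i + 1]).digest()
                 for i in range(0, len(level), 2)]
    return level[0].hex()


def state_root(log: CheckpointLog) -> str:
    """Deterministic commitment to all checkpoints, suitable for anchoring."""
    leaves = [json.dumps([wf, step, out], sort_keys=True).encode()
              for (wf, step), out in sorted(log.entries.items())]
    return merkle_root(leaves)


calls: List[str] = []


def fetch_quote() -> float:
    calls.append("fetch")  # stands in for an external side effect
    return 101.5


log = CheckpointLog()
log.run_step("agent-1", 0, fetch_quote)
log.run_step("agent-1", 0, fetch_quote)  # replayed from the log, not re-run
print(len(calls))  # 1
print(state_root(log))
```

Sorting the checkpoints before hashing makes the root independent of insertion order, so any two nodes holding the same checkpoints compute the same value.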

Developers will be able to publish permissionless, pre-compiled DBOS workflow modules, such as a "DeFi Arbitrage Skill" or a "Zero-Knowledge Data Scraping Skill", to the VAMS network. When a creator deploys an agent, they will visually or programmatically stack these Skill Blocks. The VAMS protocol will then automatically provision the necessary underlying infrastructure via the Blueprint Engine, abstracting hardware complexity entirely away from the AI developer.
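
Stacking Skill Blocks implies two operations: summing each block's resource needs into a single requirement for the Blueprint Engine, and chaining the blocks' logic in order. Here is a minimal Python sketch, assuming a hypothetical manifest in which every block declares a per-dimension `requirement` and a `run` function over a shared context; the `SkillBlock` and `stack` names, block names, and placeholder data are illustrative only.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Context = Dict[str, object]


@dataclass(frozen=True)
class SkillBlock:
    """Hypothetical manifest for a published Skill Block."""
    name: str
    requirement: Dict[str, float]      # resources this block needs per instance
    run: Callable[[Context], Context]  # the block's workflow logic


def stack(blocks: List[SkillBlock]) -> Tuple[Dict[str, float], Callable[[Context], Context]]:
    """Sum the blocks' requirements and chain their logic in order."""
    total: Dict[str, float] = {}
    for block in blocks:
        for dim, amount in block.requirement.items():
            total[dim] = total.get(dim, 0.0) + amount

    def pipeline(ctx: Context) -> Context:
        for block in blocks:
            ctx = block.run(ctx)  # each block's output feeds the next block
        return ctx

    return total, pipeline


def scrape(ctx: Context) -> Context:
    # Placeholder data; a real scraping block would fetch and prove its sources.
    return {**ctx, "prices": [100.0, 101.5]}


def arbitrage(ctx: Context) -> Context:
    prices = ctx["prices"]
    return {**ctx, "spread": max(prices) - min(prices)}


requirement, agent = stack([
    SkillBlock("data-scraping", {"cpu": 2, "network": 50}, scrape),
    SkillBlock("defi-arbitrage", {"cpu": 4, "ram": 8}, arbitrage),
])
print(requirement)          # {'cpu': 6.0, 'network': 50.0, 'ram': 8.0}
print(agent({})["spread"])  # 1.5
```

Summing requirements assumes blocks run concurrently on the same instance; if blocks ran strictly one after another, taking the per-dimension maximum would be the tighter estimate.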

4. Regional Edge-Routing: Upgrading the CLR & Economics

The Academic Insight: The ICN paper astutely notes that Agentic AI requires minimal latency to execute sense-think-act loops, heavily favoring decentralized edge-compute over centralized hyperscalers.

The VAMS Upgrade: High-Frequency Trading (HFT) agents on VAMS require sub-second finality. To accommodate them, we are upgrading the CLR and our Dynamic Emission Controller (DEC) with geographic and latency-bound routing constraints. If an agent requires sub-50ms latency for a specific workflow, the CLR will restrict its blueprint composition strictly to DePIN edge nodes within the target geographic region. To sustain this economically, the DEC will introduce Regional x402 Pricing, allowing hardware providers in high-demand edge locations to earn a premium in $VAMS. This is designed to align supply with programmatic agent demand.
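
Latency-bound routing reduces to filtering candidate edge nodes by region and measured round-trip time before composition, with pricing adjusted by regional demand. The text does not specify the DEC's premium formula, so this Python sketch assumes a hypothetical linear surcharge on utilization; `EdgeNode`, `eligible_nodes`, `regional_price`, the node IDs, and the 0.5 premium are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EdgeNode:
    node_id: str
    region: str
    rtt_ms: float       # measured round-trip latency to the agent
    utilization: float  # share of regional capacity in use, 0.0 to 1.0


def eligible_nodes(nodes: List[EdgeNode], region: str, max_latency_ms: float) -> List[EdgeNode]:
    """Keep only nodes in the target region that meet the latency bound, fastest first."""
    return sorted(
        (n for n in nodes if n.region == region and n.rtt_ms <= max_latency_ms),
        key=lambda n: n.rtt_ms,
    )


def regional_price(base_price: float, utilization: float, premium: float = 0.5) -> float:
    """Hypothetical linear surcharge: up to +premium (50% by default) at full utilization."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return base_price * (1.0 + premium * utilization)


nodes = [
    EdgeNode("fra-1", "eu-central", rtt_ms=12.0, utilization=0.9),
    EdgeNode("fra-2", "eu-central", rtt_ms=65.0, utilization=0.4),
    EdgeNode("nyc-1", "us-east", rtt_ms=8.0, utilization=0.2),
]
for node in eligible_nodes(nodes, "eu-central", max_latency_ms=50):
    print(node.node_id, regional_price(1.0, node.utilization))
```

Only `fra-1` survives the 50 ms bound here: `fra-2` is in the right region but too slow, and `nyc-1` is fast but outside the target region. Tying the premium to utilization means the most contested edge locations pay the most, which is the incentive the Regional Pricing mechanism aims for.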

Conclusion: The Sovereign Brain

The "It from Bit" philosophy remains our guiding star. Hardware is a commodity; the platform and the routing logic generate the true value. By integrating continuous HyperNode-style verification, logical resource abstraction, and latency-optimized edge routing, VAMS is not just building infrastructure—we are mathematically constructing the Sovereign Brain for the Agentic Web.